on EU projects on verification & AI, in particular the ERC grant InOVationCS starting in June 2025.

The Learning in Verification group (LiVe Lab) focuses on the interactions of machine learning and verification. Our research includes explainable AI, verification of neural networks, stochastic games and control, probabilistic model checking, temporal logics (mainly LTL and PCTL), and automata theory. Our research is applicable in the robotics, biomedical, and automotive domains. The team is distributed between Masaryk University in Brno, Czech Republic, and the Technical University of Munich, Germany.

Research Areas

Neural Network Safety

Runtime Monitoring of Neural Networks

We develop runtime monitors for neural networks to improve their reliability.

Abstraction of Neural Networks

We abstract neural networks to improve verification speed.

Automata and Logic

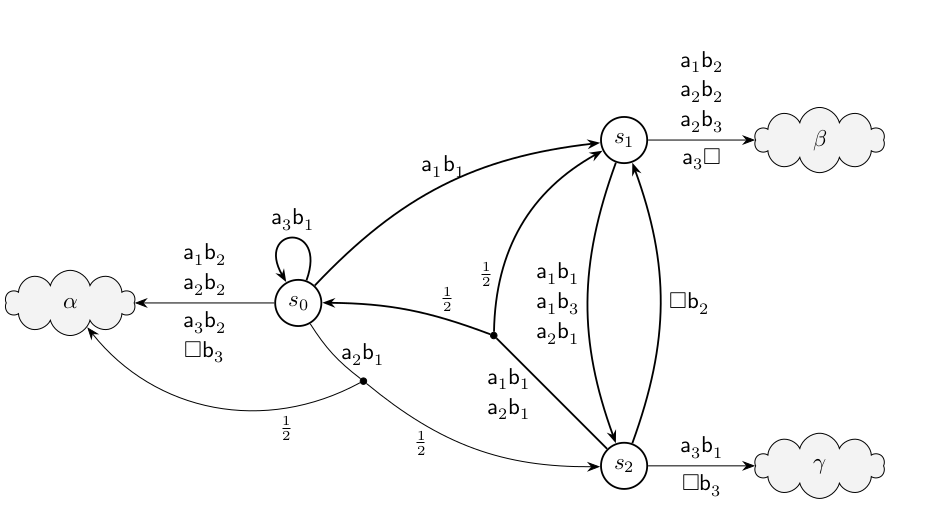

Machine Learning for LTL Synthesis

We apply machine learning to the LTL synthesis problem.

Translating LTL to Automata

We design algorithms for translating LTL to different types of automata.

Explainability

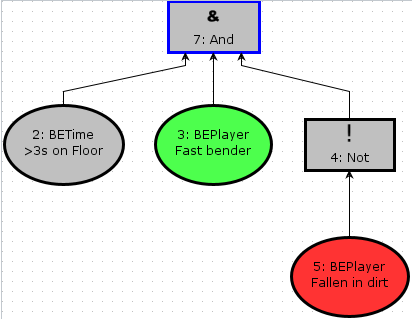

Explaining Controllers via Decision Trees

We develop methods for explaining controllers with decision trees.

Explaining Controllers via Automata

We develop methods for explaining controllers via automata.

Security Analysis via Learning and Model-Checking Attack-Defense Trees

Algorithms for Stochastic Games

Value Iteration for Stochastic Games

We develop value iteration algorithms for stochastic games.
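As a rough illustration of the basic idea, the following minimal sketch runs textbook value iteration from below on a turn-based stochastic reachability game. It is not one of the group's algorithms: the function `value_iteration`, the toy game, and the fixed iteration count are all assumptions made for this example, and plain iteration of this kind offers no guarantee on how close the result is to the true value.

```python
from typing import Dict, List

# An action is a probability distribution over successor states.
Distribution = Dict[str, float]


def value_iteration(
    owner: Dict[str, str],
    actions: Dict[str, List[Distribution]],
    target: str,
    iterations: int = 100,
) -> Dict[str, float]:
    """Approximate the probability of reaching `target` from below.

    `owner[s]` is "max" or "min"; states without actions keep value 0.
    """
    values = {s: 1.0 if s == target else 0.0 for s in owner}
    for _ in range(iterations):
        new_values = {}
        for s in owner:
            if s == target or not actions[s]:
                # Target and dead-end states keep their fixed value.
                new_values[s] = values[s]
                continue
            # Expected value of each available action under current estimates.
            outcomes = [
                sum(p * values[t] for t, p in dist.items())
                for dist in actions[s]
            ]
            # The maximizer picks the best action, the minimizer the worst.
            new_values[s] = max(outcomes) if owner[s] == "max" else min(outcomes)
        values = new_values
    return values


# Hypothetical toy game: s0 belongs to the maximizer, s1 to the minimizer.
owner = {"s0": "max", "s1": "min", "goal": "max", "sink": "max"}
actions = {
    "s0": [{"s1": 1.0}, {"goal": 0.5, "sink": 0.5}],
    "s1": [{"goal": 0.7, "sink": 0.3}, {"s0": 1.0}],
    "goal": [],
    "sink": [],
}
game_values = value_iteration(owner, actions, target="goal")
```

In this toy game the two players can cycle between `s0` and `s1` forever, so the update equations have more than one fixed point; starting from 0 and iterating upward selects the least one, which is the reachability value.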

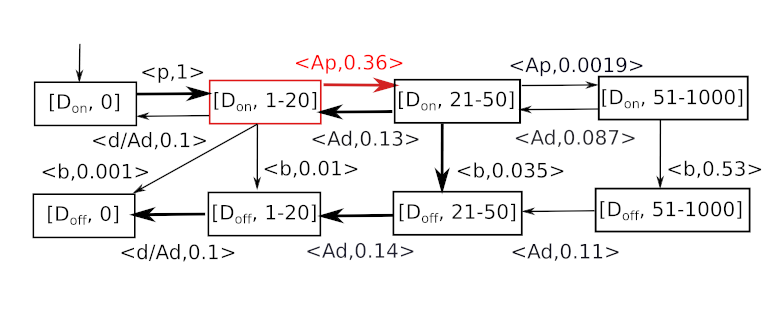

Concurrent Stochastic Games

We develop techniques for the verification of concurrent stochastic games, which extend turn-based stochastic games by allowing players to select actions simultaneously in each state. This reflects more realistic scenarios in which interactive agents act concurrently.

Analysis of Probabilistic Systems

Tools

QuADTool

We provide a user-friendly tool for the synthesis of cyber-security models based on the attack-tree concept. It also features interfaces to a variety of other tools and model checkers, as well as built-in analysis for uncertain values. Additionally, the CLI can be used to learn attack trees from log files or other traces.

SeQuaiA

This tool implements the semi-quantitative analysis of chemical reaction networks.

SemML

In this project, we develop learning-based exploration heuristics for LTL synthesis that exploit the semantic labelling of the underlying automaton or game.

Owl

Owl is designed to help researchers in formal methods work with ω-words, ω-automata, and LTL.

MONITIZER

Monitizer is a tool that optimizes monitors of a neural network for a specific task.

dtControl

dtControl represents controllers as decision trees, reducing their memory footprint and improving explainability while preserving guarantees.

Automata Tutor

Automata Tutor is an online teaching tool that aids instructors and students in large courses on automata and formal languages, offering many different exercise types.